Week Introduction

Cybersecurity is not about hackers and firewalls. It is about systems under uncertainty — managing risk when you cannot predict every threat, control every user, or eliminate every vulnerability.

This week establishes the foundational lens: cybersecurity as a discipline of risk reasoning, systems thinking, and trade-off justification. Everything you learn over the next three years builds on this foundation.

Learning Outcomes (Week 1 Focus)

By the end of this week, you should be able to:

- LO1 - Systems Thinking: Explain cybersecurity problems as properties of systems, not isolated technical failures

- LO2 - Asset & Risk Reasoning: Identify what needs protection and why, using the CIA triad and the risk formula (Threat × Vulnerability × Impact)

- LO3 - Framework Application: Map security activities to the NIST Cybersecurity Framework's five functions (Identify, Protect, Detect, Respond, Recover)

- LO4 - Professional Ethics: Explain ethical responsibilities in cybersecurity, including responsible disclosure and legal boundaries

- LO5 - Risk Communication: Translate technical security concepts into business language for non-technical stakeholders

- LO8 - Integration: Begin constructing a coherent security narrative (foundation for later synthesis)

Lesson 1.1 · Cybersecurity Is a Systems Problem

Misconception: "Cybersecurity = preventing hackers from breaking in."

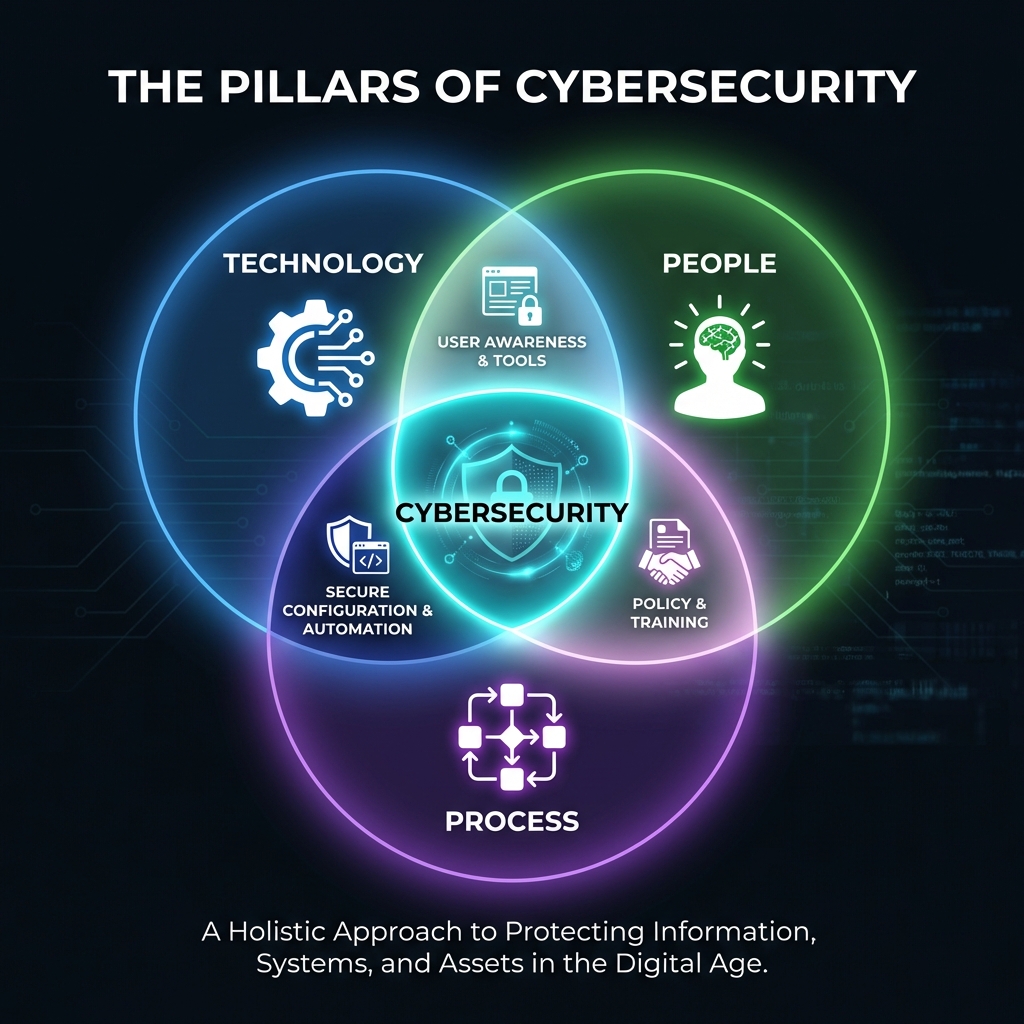

Reality: Cybersecurity is about protecting socio-technical systems — systems built by humans, operated by humans, and connected to other systems. Security failures emerge from design choices, not just attacker skill.

Example: When a hospital's patient records are exposed, the problem is rarely "the hacker was too good." It's usually: weak access controls (design), misconfigured databases (operation), or unpatched software (maintenance). The system was vulnerable by design.

Why this matters: If you think security is about technology alone, you'll miss the human, process, and organizational factors that create most vulnerabilities. Systems thinking means asking: "What assumptions does this system make? Where do they fail?"

Lesson 1.2 · The CIA Triad (Foundational Risk Model)

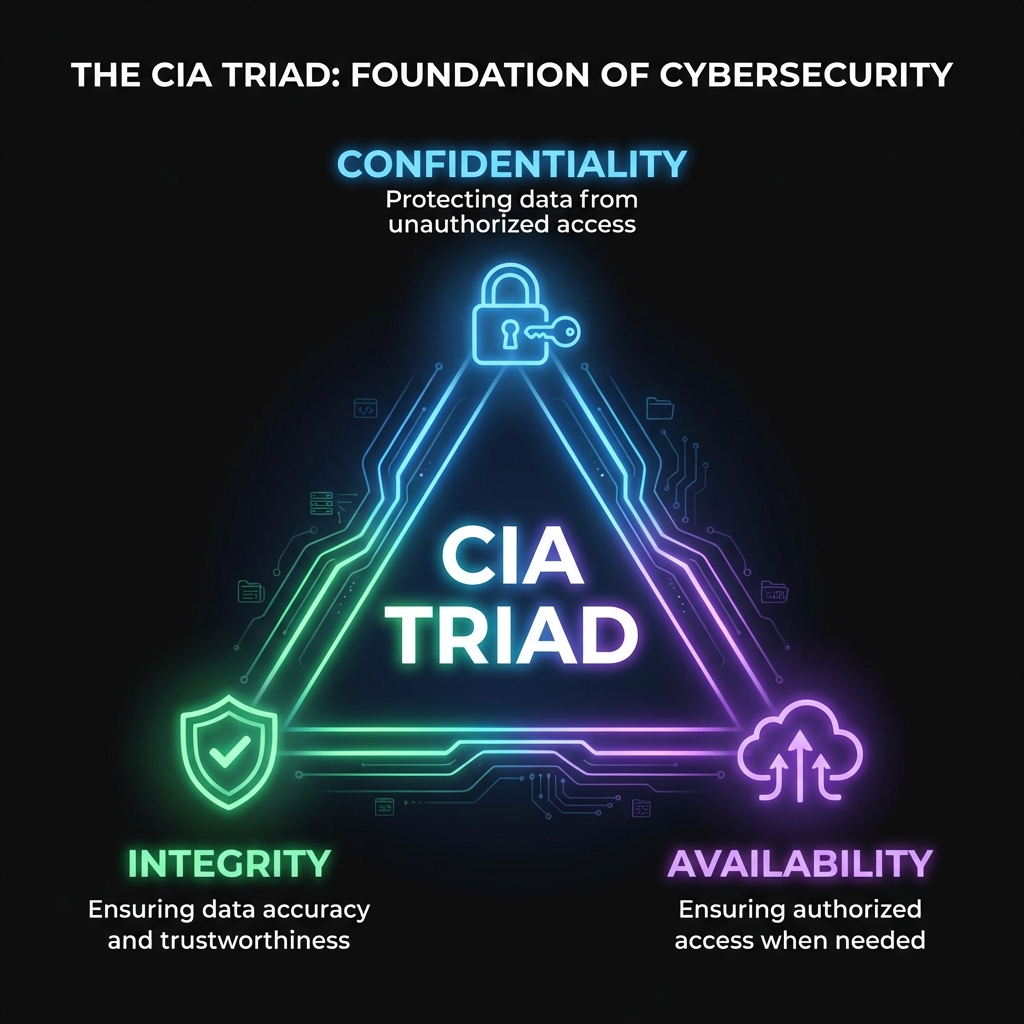

The CIA Triad is one of the oldest and most durable models in cybersecurity. It defines three fundamental properties that security aims to protect:

- Confidentiality: Information is accessible only to those authorized to access it.
  Failure example: Patient medical records posted publicly due to misconfigured cloud storage.

- Integrity: Information and systems are accurate and have not been tampered with.
  Failure example: An attacker modifies transaction amounts in a banking system.

- Availability: Authorized users can access systems and data when needed.
  Failure example: A ransomware attack encrypts hospital systems during an emergency.

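These three properties are often in tension. Maximizing confidentiality (for example, strict access controls and mandatory encryption) can reduce availability for legitimate users. Maximizing availability can weaken integrity checks (for example, skipping validation steps to keep a service fast and always on). Your job is to balance these trade-offs based on context.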

Lesson 1.3 · Perfect Security Does Not Exist (Trade-offs Are Inevitable)

The security trilemma: You cannot simultaneously maximize security, usability, and cost-effectiveness. Every system makes trade-offs.

Real-world examples:

- Multi-factor authentication (MFA): Increases security (harder to compromise) but reduces usability (more steps to log in). Organizations must decide: Is the friction worth the protection?

- Full disk encryption: Protects confidentiality if a laptop is stolen, but slightly reduces performance and complicates recovery if the user forgets their password.

- Open vs. closed networks: An air-gapped (isolated) network is very secure but sacrifices convenience and connectivity. A fully open network is convenient but exposes more attack surface.

Mature cybersecurity professionals don't seek "perfect security." They seek acceptable risk — reducing exposure to a level the organization can justify given its resources, mission, and threat environment.

Lesson 1.4 · Risk = Threat × Vulnerability × Impact

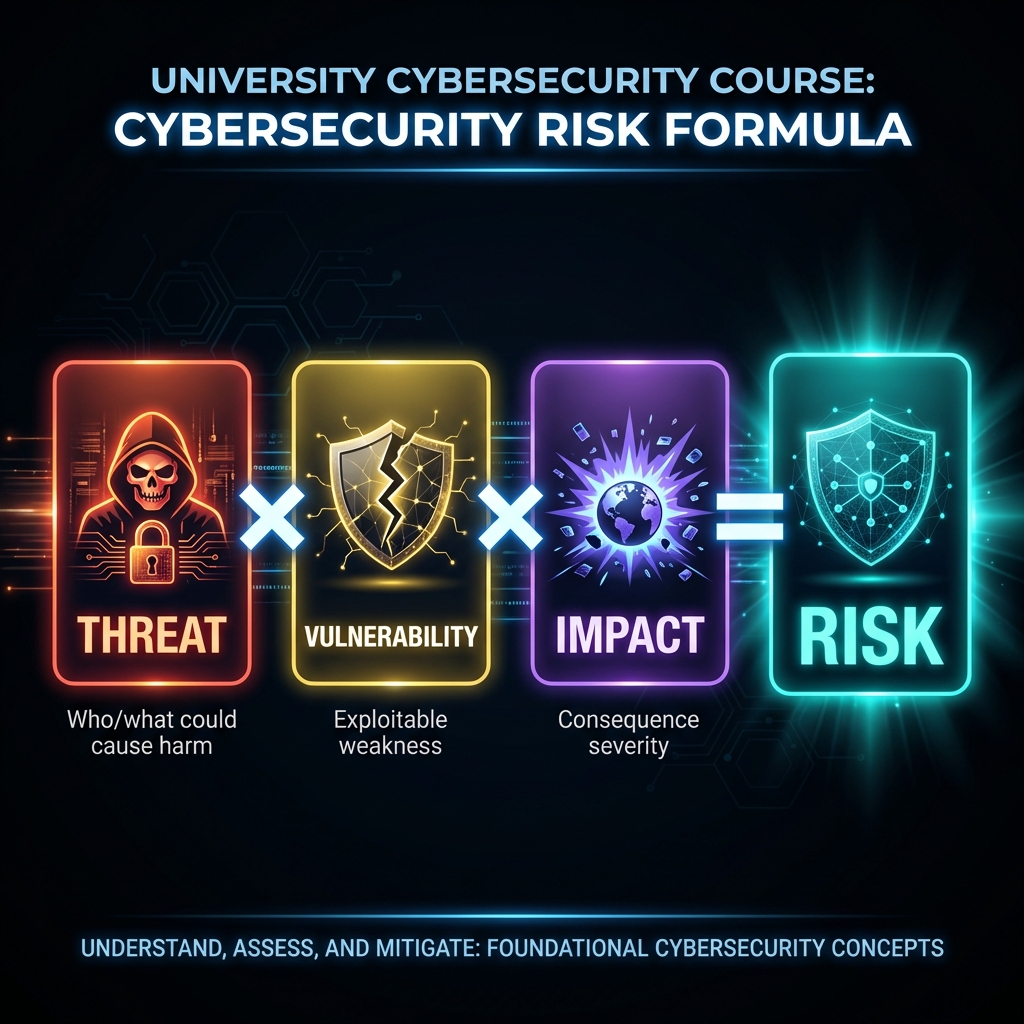

Risk is not binary (safe/unsafe). It is a function of three variables:

- Threat: What or who could cause harm? (Attackers, accidents, natural events)

- Vulnerability: What weakness could they exploit? (Bugs, misconfigurations, human error)

- Impact: How bad would the damage be? (Data loss, financial harm, reputational damage)

Example: A university learning management system (LMS) stores student grades.

- Threat: Motivated students (or external attackers) who want to change grades

- Vulnerability: Weak authentication (passwords only, no MFA) and insufficient access logging

- Impact: Grade tampering undermines academic integrity and could lead to legal consequences or loss of accreditation

Risk calculation: Even if the vulnerability exists, if there is no credible threat (e.g., the system is offline and air-gapped), risk is low. Conversely, even a small vulnerability becomes high-risk if the impact is catastrophic (e.g., medical device failure).
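To see why the factors multiply rather than add, here is a minimal Python sketch. It assumes a hypothetical 0-5 rating for each factor and made-up band cut-offs; neither comes from a standard, and real risk methodologies define their own scales. The ratings for each scenario are illustrative guesses, not measurements.

```python
# Minimal sketch of Risk = Threat x Vulnerability x Impact.
# ASSUMPTION: each factor is rated on a hypothetical 0-5 ordinal scale
# (0 = absent, 5 = severe). The scale and band cut-offs are illustrative only.

def risk_score(threat: int, vulnerability: int, impact: int) -> int:
    """Multiply the three factors; a 0 in any factor makes the risk 0."""
    for name, value in (("threat", threat), ("vulnerability", vulnerability), ("impact", impact)):
        if not 0 <= value <= 5:
            raise ValueError(f"{name} must be between 0 and 5, got {value}")
    return threat * vulnerability * impact


def risk_band(score: int) -> str:
    """Translate a 0-125 score into a label (hypothetical cut-offs)."""
    if score >= 40:
        return "High"
    if score >= 15:
        return "Medium"
    return "Low"


# Hypothetical (threat, vulnerability, impact) ratings for this lesson's scenarios.
scenarios = {
    "LMS grades (motivated students, password-only login)": (4, 3, 4),
    "Same LMS, air-gapped with no credible threat": (0, 3, 4),
    "Small weakness, low-impact system": (4, 2, 1),
    "Small weakness, medical device (catastrophic impact)": (4, 2, 5),
}

for label, (t, v, i) in scenarios.items():
    score = risk_score(t, v, i)
    print(f"{label}: {score} -> {risk_band(score)}")
```

The last two rows share the same threat and vulnerability ratings; only impact changes, and the band moves from Low to High. The air-gapped row shows the other edge: with no credible threat, the product is zero however weak the system is.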

Lesson 1.5 · Why "Checklist Security" Fails

Trap for beginners: Thinking security is about following a checklist ("install antivirus, enable firewall, done").

Checklists assume static threats and universal solutions. Real systems are dynamic, contexts vary, and attackers adapt. A control that works for one organization might be useless (or harmful) for another.

Better approach: principle-based security. Understand the "why" behind controls, then adapt them to your specific system, threats, and constraints.

Lesson 1.6 · The NIST Cybersecurity Framework (Your Professional Roadmap)

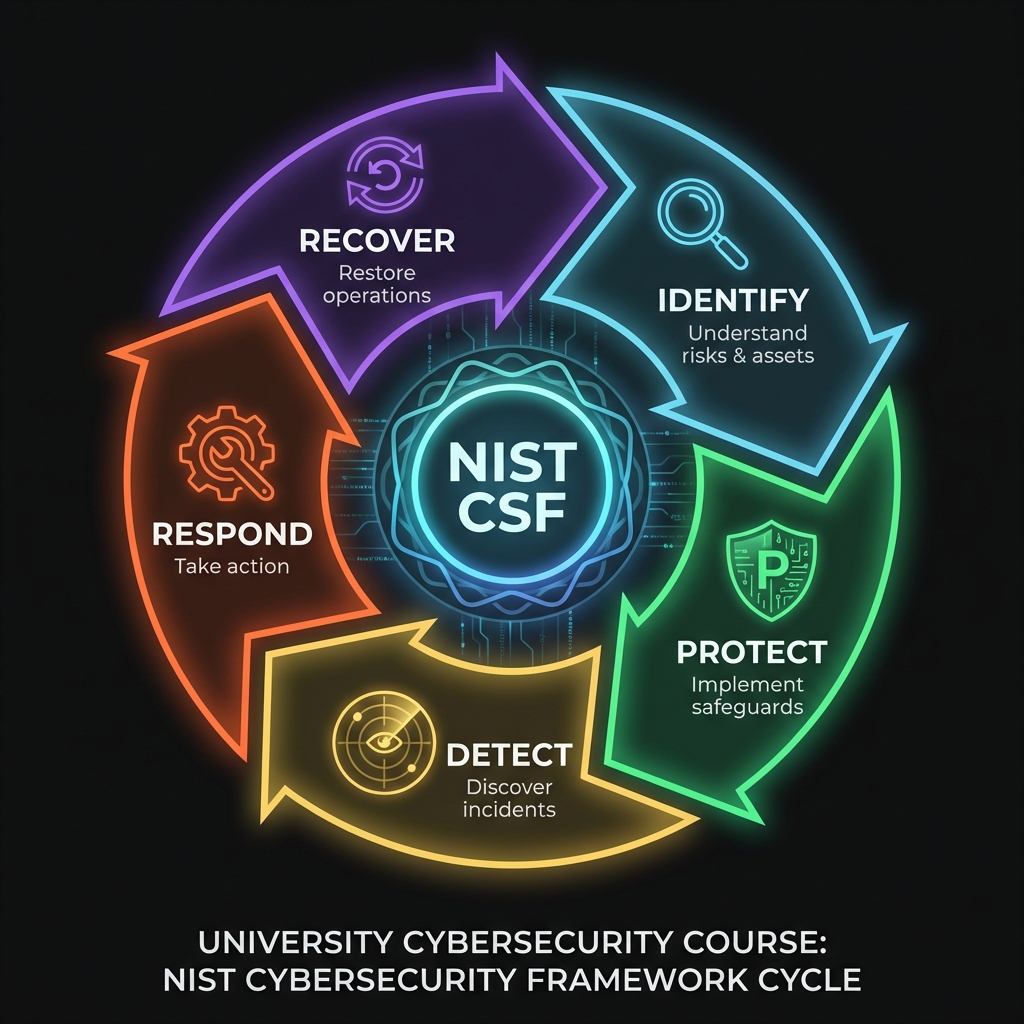

Why this matters: The NIST Cybersecurity Framework (CSF) is one of the most widely adopted security frameworks in the world. Fortune 500 companies, government agencies, and security professionals use its structure to organize security programs. Understanding it now gives you a mental model you'll use for your entire career.

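The Five Core Functions: NIST CSF organizes security activities into five core functions (CSF 2.0, released in 2024, adds a sixth, Govern, which sets the strategy and oversight for the other five):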

1. Identify: Understand your assets, risks, and vulnerabilities.
   Question to ask: "What needs protection, and what are the risks?"
   Example activities: Asset inventory, risk assessment, threat identification

2. Protect: Implement controls to reduce risk.
   Question to ask: "How do we prevent or reduce the likelihood of incidents?"
   Example activities: Access controls, encryption, security training, network segmentation

3. Detect: Discover security events when they occur.
   Question to ask: "How quickly can we identify when something goes wrong?"
   Example activities: Log monitoring, intrusion detection, anomaly detection

4. Respond: Take action when incidents are detected.
   Question to ask: "What do we do when an incident happens?"
   Example activities: Incident response plans, containment, forensics, communication

5. Recover: Restore systems and services after an incident.
   Question to ask: "How do we return to normal operations and improve?"
   Example activities: Backup restoration, business continuity, lessons learned

These five functions form a cycle, not a checklist. You continuously identify new risks, protect against them, detect incidents, respond to them, and recover while learning. This week's focus is primarily on Identify (understanding what you're protecting and why).
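The cycle can be sketched in a few lines of Python. The `CsfFunction` enum and the `next_function` helper are hypothetical names for illustration; the activities are the examples listed above. The design point worth noticing is the wrap-around: Recover leads back into Identify instead of ending the list.

```python
from enum import Enum


class CsfFunction(Enum):
    """The five NIST CSF core functions discussed in this lesson, in cycle order.
    (CSF 2.0 also defines Govern, which this sketch does not model.)"""
    IDENTIFY = "Identify"
    PROTECT = "Protect"
    DETECT = "Detect"
    RESPOND = "Respond"
    RECOVER = "Recover"


# Example activities taken from the lesson text.
EXAMPLE_ACTIVITIES = {
    CsfFunction.IDENTIFY: ["asset inventory", "risk assessment", "threat identification"],
    CsfFunction.PROTECT: ["access controls", "encryption", "security training", "network segmentation"],
    CsfFunction.DETECT: ["log monitoring", "intrusion detection", "anomaly detection"],
    CsfFunction.RESPOND: ["incident response plans", "containment", "forensics", "communication"],
    CsfFunction.RECOVER: ["backup restoration", "business continuity", "lessons learned"],
}


def next_function(current: CsfFunction) -> CsfFunction:
    """Return the function after `current`; Recover wraps back to Identify."""
    members = list(CsfFunction)  # Enum iteration preserves definition order
    return members[(members.index(current) + 1) % len(members)]


# Walk the cycle twice: lessons learned in Recover feed the next round of Identify.
step = CsfFunction.IDENTIFY
for _ in range(2 * len(CsfFunction)):
    print(f"{step.value}: {', '.join(EXAMPLE_ACTIVITIES[step])}")
    step = next_function(step)
```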

Connection to this week: The CIA Triad (Lesson 1.2) maps most directly to the "Protect" function, since protective controls exist to preserve confidentiality, integrity, and availability. The risk formula (Lesson 1.4) maps to the "Identify" function. You're already learning the framework; now you have professional vocabulary for it.

Lesson 1.7 · Professional Ethics in Cybersecurity

Why start with ethics? Cybersecurity professionals have extraordinary power: access to sensitive data, ability to bypass controls, knowledge of vulnerabilities. With this power comes ethical responsibility. Understanding professional ethics isn't optional—it's foundational.

Core Ethical Principles (adapted from the ACM Code of Ethics):

- Do no harm: Your skills should protect, not exploit. Even in offensive security roles (penetration testing, red teams), you operate with explicit authorization and defined scope.

- Respect privacy: Just because you can access data doesn't mean you should. Access only what's necessary for your authorized role.

- Act professionally: Maintain confidentiality, disclose responsibly, honor agreements (NDAs, contracts, scope limits).

- Contribute to society: Use your knowledge to improve security for everyone, not just those who can pay. This includes responsible disclosure of vulnerabilities.

Real-world example - Responsible Disclosure: Imagine you discover a critical vulnerability in a popular website while practicing your skills. The ethical path:

- Do NOT exploit it for personal gain

- Do NOT publicly disclose it immediately (doing so gives attackers a blueprint before a fix exists)

- Privately notify the organization with details to fix it

- Give them reasonable time to patch (typically 90 days)

- Only then (if appropriate) publish findings to help the community learn
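The timeline step is simple date arithmetic. This sketch assumes the 90-day window mentioned above and a hypothetical report date; `disclosure_date` and `days_remaining` are illustrative helpers, not part of any disclosure program's tooling.

```python
from datetime import date, timedelta

# ASSUMPTION: the 90-day window mentioned in the lesson. Real programs vary and
# may grant extensions or shorten the window if a flaw is actively exploited.
DISCLOSURE_WINDOW = timedelta(days=90)


def disclosure_date(reported_on: date, window: timedelta = DISCLOSURE_WINDOW) -> date:
    """Earliest date to consider publishing, counted from the private report."""
    return reported_on + window


def days_remaining(reported_on: date, today: date) -> int:
    """Days the vendor still has to patch; 0 once the window has passed."""
    return max(0, (disclosure_date(reported_on) - today).days)


reported = date(2024, 1, 10)          # hypothetical date you privately notified the vendor
print(disclosure_date(reported))      # 2024-04-09
print(days_remaining(reported, date(2024, 2, 1)))  # 68
```

The computed date is a starting point for coordination with the vendor, not a countdown that triggers publication automatically.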

Legal boundaries: "Testing" systems you don't own or aren't authorized to test is illegal in most jurisdictions (e.g., the Computer Fraud and Abuse Act in the US, the Computer Misuse Act in the UK). Always get explicit written authorization before security testing.

Professional certifications and ethics: Organizations like (ISC)², EC-Council, and ISACA require certified professionals to agree to codes of ethics. Violations can result in certification revocation and legal consequences.

Lesson 1.8 · Communicating Risk to Non-Technical Stakeholders

Essential skill: Technical security knowledge is only valuable if you can explain it to decision-makers who control budgets and priorities. Executives, board members, and business leaders rarely have deep technical backgrounds.

Common mistake: "We need to patch CVE-2024-12345 with CVSS score 9.8 affecting our Apache Struts servers because of remote code execution via OGNL injection."

Better: "Our public-facing web servers have a critical vulnerability. Attackers could gain complete control of these systems and access customer data. This is the same type of vulnerability that led to the Equifax breach (143 million records exposed, $700M+ cost). We need to apply the security update this week. The risk is high, and the fix is available."

Framework for risk communication:

- What's at risk? (Customer data, revenue, reputation—not "the database")

- What could happen? (Data breach, system downtime, regulatory fines—concrete impacts)

- How likely is it? (High/Medium/Low with context, not just numbers)

- What do we do about it? (Clear recommendations with costs and timelines)

- What happens if we don't act? (Business consequences, not just technical)
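One way to practice the framework is to force every brief through the same five fields. In this sketch, `cvss_severity` follows the qualitative rating scale published with CVSS v3.x (Low 0.1-3.9, Medium 4.0-6.9, High 7.0-8.9, Critical 9.0-10.0); the `RiskBrief` template and its wording are hypothetical. The CVSS rating appears only as supporting evidence inside the likelihood line, never as the headline.

```python
from dataclasses import dataclass


def cvss_severity(score: float) -> str:
    """Qualitative rating for a CVSS v3.x base score."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1-3.9
    if score < 7.0:
        return "Medium"    # 4.0-6.9
    if score < 9.0:
        return "High"      # 7.0-8.9
    return "Critical"      # 9.0-10.0


@dataclass
class RiskBrief:
    """Hypothetical template: one field per question in the framework above."""
    at_risk: str
    what_could_happen: str
    likelihood: str
    recommendation: str
    if_we_do_nothing: str

    def to_text(self) -> str:
        return "\n".join([
            f"What's at risk: {self.at_risk}",
            f"What could happen: {self.what_could_happen}",
            f"How likely: {self.likelihood}",
            f"What we recommend: {self.recommendation}",
            f"If we don't act: {self.if_we_do_nothing}",
        ])


# Rewriting this lesson's CVSS 9.8 example in business terms.
brief = RiskBrief(
    at_risk="Customer data on our public-facing web servers",
    what_could_happen="Attackers could take complete control of these servers and copy customer data",
    likelihood=f"High: the servers are internet-facing and the flaw is rated {cvss_severity(9.8)}",
    recommendation="Apply the available security update this week",
    if_we_do_nothing="A breach like Equifax's, with regulatory fines and lost customer trust",
)
print(brief.to_text())
```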

Practice opportunity: In Lab 1, you identified assets and CIA properties. Practice explaining ONE of your findings to someone without a technical background (friend, family member). Can they understand the risk without knowing what SQL injection or buffer overflows are?

Self-Check Questions (Test Your Understanding)

Answer these in your own words (2-3 sentences each):

- What makes cybersecurity a "systems problem" rather than just a technology problem?

- Explain the CIA Triad and give one real-world example of each property failing.

- Why is "perfect security" impossible? Give one concrete trade-off example.

- In the risk formula (Threat × Vulnerability × Impact), why do all three factors matter? Can you have high risk with a low-impact event?

- What is the difference between a security checklist and principle-based security?

- Which of the five NIST CSF functions (Identify, Protect, Detect, Respond, Recover) is most relevant to the CIA Triad? Explain your reasoning.

- Why is responsible disclosure important? What could happen if you immediately published a critical vulnerability you discovered?

- Translate this technical statement for a non-technical executive: "We need to implement multi-factor authentication because password-only authentication has a high risk of credential theft."

Lab 1 · Systems Thinking: Mapping Assets, Boundaries, and Risk

Time estimate: 30-45 minutes

Objective: Apply systems thinking to a real system you use daily. By the end, you will identify what needs protection (assets), where risk exists (vulnerabilities), and what failure looks like (impact).

Step 1: Choose Your System (5 minutes)

Select one system from this list (or propose your own):

- University learning management system (LMS) where grades are stored

- Personal email account (Gmail, Outlook, etc.)

- Online banking app

- Workplace collaboration tool (Slack, Teams, etc.)

- Social media account (Instagram, LinkedIn, etc.)

Why it matters: You must deeply understand a system before you can secure it.

Step 2: Identify Assets (10 minutes)

List at least 3 assets the system protects. For each asset, note:

- What is it? (e.g., "student grades," "personal emails," "bank account balance")

- Who cares about it? (e.g., students, employers, account holder)

- Which CIA property matters most? (Confidentiality, Integrity, or Availability)

Example for LMS:

- Asset: Student grades

- Who cares: Students, employers, accreditation bodies

- Primary CIA property: Integrity (grades must be accurate and tamper-proof)
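If you keep your worksheet as structured data, you can check it against the success criteria before you write the synthesis. This is a minimal sketch: the `Asset` fields mirror the three questions above, the first row is the LMS example, and the other two rows (including the deliberately missing justification) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class CiaProperty(Enum):
    CONFIDENTIALITY = "Confidentiality"
    INTEGRITY = "Integrity"
    AVAILABILITY = "Availability"


@dataclass(frozen=True)
class Asset:
    """One row of the Step 2 worksheet (field names are hypothetical)."""
    name: str
    stakeholders: tuple[str, ...]
    primary_property: CiaProperty
    justification: str


def check_assets(assets: list[Asset]) -> list[str]:
    """Flag gaps against the success criteria: 3+ distinct assets, each justified."""
    problems = []
    if len({a.name for a in assets}) < 3:
        problems.append("List at least 3 distinct assets.")
    for a in assets:
        if not a.justification.strip():
            problems.append(f"Justify the CIA property chosen for '{a.name}'.")
    return problems


# First row comes from the lesson's LMS example; the other two are illustrative.
lms_assets = [
    Asset("Student grades", ("Students", "Employers", "Accreditation bodies"),
          CiaProperty.INTEGRITY, "Grades must be accurate and tamper-proof"),
    Asset("Assignment submission portal", ("Students", "Instructors"),
          CiaProperty.AVAILABILITY, "Must be reachable before deadlines"),
    Asset("Student personal records", ("Students",),
          CiaProperty.CONFIDENTIALITY, ""),
]

for issue in check_assets(lms_assets):
    print(issue)  # flags the missing justification on the third asset
```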

Step 3: Map Confidentiality Risks (10 minutes)

Answer these questions:

- What information should remain confidential (secret)?

- Who is authorized to access it?

- What would happen if this information was leaked publicly?

Example for email:

- Confidential info: Personal conversations, work documents, password reset links

- Authorized access: Only the account owner

- Leak impact: Privacy violation, identity theft risk, professional embarrassment

Step 4: Map Integrity Risks (10 minutes)

Answer these questions:

- What information must remain accurate and unmodified?

- Who is allowed to change it?

- What would happen if this information was tampered with?

Example for banking app:

- Must stay accurate: Account balance, transaction history

- Who can change: Only authorized bank systems (not users directly)

- Tampering impact: Financial fraud, incorrect balances, loss of trust

Step 5: Map Availability Risks (5 minutes)

Answer these questions:

- When must this system be accessible?

- What happens if it becomes unavailable?

Example for LMS:

- Must be available: During assignment deadlines, exam periods

- Unavailability impact: Students miss deadlines, exams are disrupted, academic integrity is questioned

Step 6: Synthesis (5 minutes)

Write a short paragraph (3-5 sentences) answering:

"What does cybersecurity mean for this system? Which CIA property is most critical, and why?"

Example answer for LMS:

Cybersecurity for the LMS primarily means protecting the integrity of student grades and academic records. While confidentiality (keeping grades private) and availability (access during exams) matter, the most critical concern is ensuring grades cannot be tampered with. If integrity fails, the entire academic credential system loses trust. Therefore, controls like audit logging, role-based access, and change verification are essential.

Success Criteria (What "Good" Looks Like)

Your lab is successful if you:

- ✅ Identified at least 3 distinct assets

- ✅ Explained which CIA property matters most for each asset (with justification)

- ✅ Described realistic, concrete failure scenarios (what would actually happen, not a vague "something could happen")

- ✅ Wrote a synthesis that shows systems thinking (not just a list of threats)

Extension (For Advanced Students)

If you finish early, explore these questions:

- What assumptions does this system make about its users? (e.g., "users will choose strong passwords")

- If one assumption fails, how does risk cascade? (e.g., weak passwords → credential theft → account takeover)

- What one control would reduce the most risk? Why?
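The cascade question can be modeled as a small directed graph in which each failure points to the failures it enables. The graph below is a hypothetical LMS example, and treating MFA as cutting the link from credential theft to account takeover is a simplifying assumption. Walking the graph before and after removing one link is a rough way to compare which single control removes the most downstream harm.

```python
from collections import deque

# HYPOTHETICAL cascade for an LMS: each failure maps to the failures it can enable.
CASCADE: dict[str, list[str]] = {
    "weak passwords": ["credential theft"],
    "credential theft": ["account takeover"],
    "account takeover": ["grade tampering", "data exposure"],
    "grade tampering": [],
    "data exposure": [],
}


def downstream_effects(start: str, graph: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk listing every failure reachable from `start`."""
    seen = {start}
    order: list[str] = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def without_edge(graph: dict[str, list[str]], src: str, dst: str) -> dict[str, list[str]]:
    """Copy of `graph` with one link cut, modeling a control that breaks it."""
    return {n: [m for m in nexts if not (n == src and m == dst)] for n, nexts in graph.items()}


print(downstream_effects("weak passwords", CASCADE))
# ['credential theft', 'account takeover', 'grade tampering', 'data exposure']

# ASSUMPTION: MFA stops a stolen password from becoming an account takeover.
with_mfa = without_edge(CASCADE, "credential theft", "account takeover")
print(downstream_effects("weak passwords", with_mfa))
# ['credential theft']
```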

📚 Building on Prior Knowledge

No prerequisites required for this course. This week establishes the foundation you'll reuse across the curriculum:

- CSY102 (Operating Systems): Apply CIA and asset thinking to OS hardening.

- CSY103 (Programming): Use risk framing to understand secure coding decisions.

- CSY104 (Networking): Map confidentiality and integrity to network protocols.

- CSY203/204/301: Use this risk language to communicate findings to stakeholders.

Hands-On Labs (Free)

Apply what you learned through practical exercises. Complete these before moving to reading resources.

🎮 TryHackMe: Introduction to Cyber Security

What you'll do: Interactive introduction covering the CIA triad, career paths, and security principles through gamified challenges.

Why it matters: Hands-on practice reinforces concepts from this week's lessons. You'll see real examples of confidentiality, integrity, and availability failures.

Prerequisites: Free TryHackMe account

Time estimate: 1 hour

🎮 TryHackMe: Intro to Defensive Security

What you'll do: Learn about blue team roles, Security Operations Centers (SOC), and defensive security careers.

Why it matters: Complements the attacker's perspective with a defensive mindset; both are essential for systems thinking.

Prerequisites: Free TryHackMe account

Time estimate: 45 minutes

📝 Lab Exercise: CIA Triad Analysis

Task: Choose one system from Lab 1 (email, banking, LMS, etc.) and create a threat matrix:

• Identify 3 confidentiality threats

• Identify 3 integrity threats

• Identify 3 availability threats

• For each: What's the attack? What's the impact? What's the mitigation?

Deliverable: 3x3 table (9 scenarios total)

Time estimate: 30 minutes
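Keeping the 3x3 matrix as data makes it easy to see which CIA properties still need scenarios. The `Scenario` fields mirror the attack/impact/mitigation questions; the email rows are a hypothetical first draft, not a model answer.

```python
from collections import Counter
from dataclasses import dataclass

PROPERTIES = ("Confidentiality", "Integrity", "Availability")


@dataclass
class Scenario:
    prop: str        # one of PROPERTIES
    attack: str
    impact: str
    mitigation: str


def missing_rows(scenarios: list[Scenario], per_property: int = 3) -> dict[str, int]:
    """How many more scenarios each CIA property needs to reach `per_property`."""
    counts = Counter(s.prop for s in scenarios)
    return {p: per_property - counts[p] for p in PROPERTIES if counts[p] < per_property}


def render(scenarios: list[Scenario]) -> str:
    """Plain-text table, grouped by CIA property."""
    rows = ["Property | Attack | Impact | Mitigation"]
    for p in PROPERTIES:
        rows += [f"{s.prop} | {s.attack} | {s.impact} | {s.mitigation}"
                 for s in scenarios if s.prop == p]
    return "\n".join(rows)


# Hypothetical first draft for a personal email account: one row per property so far.
draft = [
    Scenario("Confidentiality", "Phishing page captures the password",
             "Attacker reads private mail", "MFA; phishing awareness"),
    Scenario("Integrity", "Intruder edits contacts and saved drafts",
             "Recipients get altered payment details", "MFA; sign-in alerts"),
    Scenario("Availability", "Repeated failed logins lock the account",
             "Owner cannot reach mail before a deadline", "Tested account-recovery options"),
]

print(render(draft))
print(missing_rows(draft))  # {'Confidentiality': 2, 'Integrity': 2, 'Availability': 2}
```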

📝 Lab Exercise: Map Assets to NIST CSF Functions

Task: Using the system you analyzed in Lab 1, map security activities to NIST CSF's five functions:

• Identify: List 2 assets and their risks

• Protect: Name 2 controls that prevent incidents (e.g., MFA, encryption)

• Detect: How would you know if this system was compromised? (2 detection methods)

• Respond: What would you do IMMEDIATELY if you detected an incident? (2 actions)

• Recover: How would you restore the system? (1-2 recovery steps)

Why it matters: This framework structures how professionals think about security programs. You'll use this in CSY303 (GRC) and CSY399 (Capstone).

Deliverable: Completed NIST CSF mapping table for your chosen system

Time estimate: 30-40 minutes
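The counts in this task can be checked mechanically. This sketch treats each number in the task list as a minimum, except Recover, which allows 1-2 entries; that reading, the `check_mapping` helper, and the sample email mapping are assumptions for illustration.

```python
# Lab requirements from the task list, as (minimum, maximum) entries per function.
# None means no upper limit. Reading "2" as a minimum is an assumption.
REQUIREMENTS = {
    "Identify": (2, None),   # 2 assets and their risks
    "Protect": (2, None),    # 2 preventive controls
    "Detect": (2, None),     # 2 detection methods
    "Respond": (2, None),    # 2 immediate actions
    "Recover": (1, 2),       # 1-2 recovery steps
}


def check_mapping(mapping: dict[str, list[str]]) -> list[str]:
    """Compare a function -> entries mapping against REQUIREMENTS."""
    problems = []
    for function, (low, high) in REQUIREMENTS.items():
        n = len(mapping.get(function, []))
        if n < low:
            problems.append(f"{function}: need at least {low}, found {n}")
        elif high is not None and n > high:
            problems.append(f"{function}: keep it to at most {high}, found {n}")
    return problems


# Hypothetical mapping for a personal email account.
email_mapping = {
    "Identify": ["Inbox contents (leak exposes private conversations)",
                 "Password reset links (theft enables takeover of other accounts)"],
    "Protect": ["Multi-factor authentication", "Unique, strong password"],
    "Detect": ["Review recent sign-in activity", "Watch for unexpected forwarding rules"],
    "Respond": ["Change the password and sign out all sessions", "Remove unknown forwarding rules"],
    "Recover": ["Restore access via the provider's account-recovery process"],
}

print(check_mapping(email_mapping) or "Mapping meets the lab requirements")
```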

💡 Lab Tip: Create a free TryHackMe account at tryhackme.com to access all labs. Track your progress and earn badges!

Reading Resources (Free + Authoritative)

Deepen your understanding with these foundational readings. Complete after labs.

📘 NIST Cybersecurity Glossary

What to read: Look up definitions for: CIA Triad, Risk, Threat, Vulnerability, Asset, Control.

Why it matters: Shared terminology is essential for professional communication.

Time estimate: 15 minutes

📘 NIST Cybersecurity Framework (Overview Only)

What to read: The "Framework Overview" section (pages 1-8 of the PDF).

Why it matters: Introduces the five core functions: Identify, Protect, Detect, Respond, Recover. This is the structure you'll use throughout your career.

Time estimate: 20 minutes

📘 ACSC Essential Eight (Australia)

What to read: The "What are the Essential Eight?" overview page.

Why it matters: Real-world example of how governments prioritize security controls. Notice they focus on 8 high-impact mitigations, not 100 checkboxes.

Time estimate: 15 minutes

🎥 Optional: Computerphile - "How to Think Like a Hacker" (Video)

What to watch: First 10 minutes (systems thinking from an adversarial perspective).

Why it matters: Complements your defender mindset by showing how attackers view systems.

Time estimate: 10 minutes (optional)


📝 Week 01 Quiz

Test your understanding of NIST CSF, risk communication, and cybersecurity fundamentals.

Format: 10 multiple-choice questions · Passing score: 70% · Time: Untimed

Weekly Reflection Prompt

Aligned to LO1 (Systems Thinking) and LO2 (Risk Reasoning)

Write 200-300 words answering this prompt:

Choose one system you analyzed in Lab 1. Explain why cybersecurity for that system is a systems problem (not just a technology problem).

In your answer, include:

- What assumptions the system makes (about users, technology, or environment)

- Where those assumptions could fail

- Why fixing the failure requires more than just "better technology"

What good looks like: You explain the interconnections (not just list threats). You show understanding that security emerges from design, not just defense.