Opening Framing: The Vulnerability Prioritization Problem

Vulnerability scanners find thousands of issues. Researchers publish new CVEs daily. Security teams face an overwhelming question: Which vulnerabilities should we fix first?

A "Critical" vulnerability in a test environment may be less urgent than a "Medium" vulnerability in your payment system. A vulnerability that's easy to exploit remotely is more dangerous than one requiring local access. How do we make these comparisons systematically?

Enter CVSS (Common Vulnerability Scoring System), the industry-standard framework for assessing vulnerability severity. CVSS turns subjective judgment into repeatable, quantifiable metrics, giving teams a consistent starting point for prioritizing remediation.

Key insight: CVSS is how security professionals communicate risk. A vulnerability scanner reports "CVSS 9.8"—you need to know what that means, how it was calculated, and whether it applies to YOUR environment. This week gives you that knowledge.

Lesson 11.1 · What Is CVSS?

CVSS (Common Vulnerability Scoring System) is a free, open framework for communicating the characteristics and severity of software vulnerabilities. Developed by FIRST (Forum of Incident Response and Security Teams), CVSS is used by:

- NIST NVD: National Vulnerability Database scores every CVE

- Vendors: Red Hat, Microsoft, Cisco publish CVSS scores in advisories

- Security Teams: Prioritize patching based on CVSS ratings

- Compliance Frameworks: PCI-DSS requires fixing high-CVSS vulnerabilities

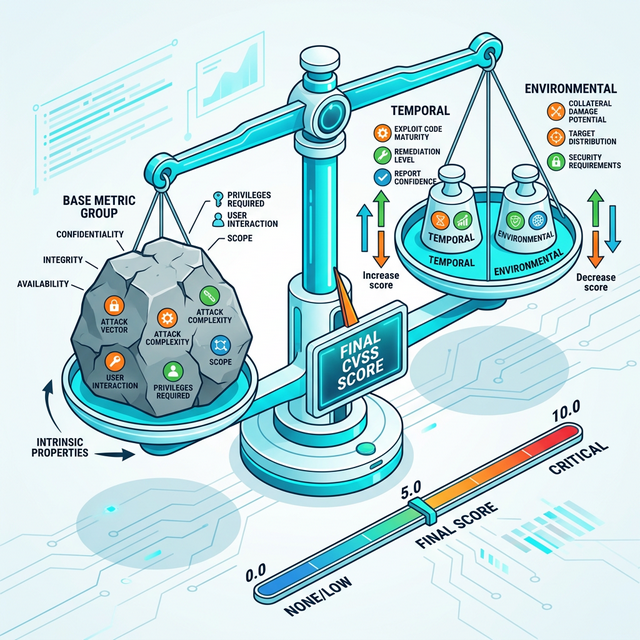

The Three Metric Groups

CVSS v3.1 (the version this lesson uses and still the most widely published; FIRST released v4.0 in November 2023) calculates scores using three metric groups:

1. Base Metrics (Required)

These describe the intrinsic characteristics of the vulnerability that don't change over time or across environments. CVSS scores you see published (e.g., "9.8") are Base Scores.

Base Score Components:

- Exploitability Metrics:

• Attack Vector (AV): Network, Adjacent, Local, Physical

• Attack Complexity (AC): Low, High

• Privileges Required (PR): None, Low, High

• User Interaction (UI): None, Required

- Impact Metrics:

• Confidentiality Impact (C): None, Low, High

• Integrity Impact (I): None, Low, High

• Availability Impact (A): None, Low, High

- Scope (S): Unchanged, Changed

(Can the vulnerability affect resources beyond its security scope?)

2. Temporal Metrics (Optional)

These change over time as exploits mature, patches are released, and workarounds are discovered.

Temporal Score Components:

- Exploit Code Maturity (E): Not Defined, Unproven, Proof-of-Concept, Functional, High

- Remediation Level (RL): Not Defined, Official Fix, Temporary Fix, Workaround, Unavailable

- Report Confidence (RC): Not Defined, Unknown, Reasonable, Confirmed

3. Environmental Metrics (Optional)

These customize the score for YOUR specific environment. A critical web server vulnerability has different impact than the same vulnerability on a test system.

Environmental Score Components:

- Security Requirements (CR, IR, AR):

• How important is Confidentiality/Integrity/Availability to your organization?

• Not Defined, Low, Medium, High

- Modified Base Metrics:

• Allows you to adjust Base metrics for your environment

• Example: "Network" attack might require VPN in your environment

CVSS Score Ranges

CVSS scores range from 0.0 to 10.0, with severity ratings:

| Rating | CVSS Score | Typical SLA (Patch Deadline) |
|---|---|---|
| Critical | 9.0 - 10.0 | 7-15 days (emergency patching) |
| High | 7.0 - 8.9 | 30 days |
| Medium | 4.0 - 6.9 | 60-90 days |
| Low | 0.1 - 3.9 | Next maintenance window |

Important: CVSS is a severity metric, not a risk metric. A CVSS 9.8 vulnerability on an isolated test system has lower risk than a CVSS 6.5 on your production payment gateway. You must combine CVSS with business context.
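The score ranges in the table above can be written as a small lookup. This is a minimal sketch: the function name `severity_rating` and the `SLA_DAYS` mapping are illustrative, the "None" rating for a score of 0.0 comes from the CVSS v3.1 specification rather than the table, and the deadlines are the example SLAs from this lesson, not part of the standard.

```python
def severity_rating(score: float) -> str:
    """Map a CVSS v3.x score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Example patch deadlines in days (upper bounds from the table above).
# These are organizational policy choices, not defined by CVSS itself.
SLA_DAYS = {"Critical": 15, "High": 30, "Medium": 90}

for score in (9.8, 7.5, 6.1, 3.9, 0.0):
    rating = severity_rating(score)
    deadline = SLA_DAYS.get(rating, "next maintenance window")
    print(score, rating, deadline)
```

Keeping the rating lookup separate from the SLA mapping reflects the point of this lesson: the rating is defined by the standard, while the deadline is your organization's decision.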

Lesson 11.2 · Base Metrics Deep Dive

Let's understand each Base Metric with real-world examples.

Attack Vector (AV)

How can the attacker reach the vulnerable component?

Network (AV:N) - Highest severity

The vulnerability is exploitable remotely over the network.

Examples:

- SQL injection in web application (anyone on internet can exploit)

- Unauthenticated RCE in network service

- Cross-site scripting (XSS)

Impact: Broadest attack surface

Adjacent (AV:A) - High severity

Attacker must be on the same network segment.

Examples:

- ARP spoofing on local network

- Bluetooth vulnerabilities

- Wi-Fi WPA2 KRACK attack

Impact: Requires proximity (same building, Wi-Fi range)

Local (AV:L) - Medium severity

Attacker needs local access to the system.

Examples:

- Privilege escalation requiring shell access

- Malicious document that a local user is tricked into opening

- DLL hijacking on local system

Impact: Requires an existing foothold (local login or remote shell) or a user who runs the attacker's file

Physical (AV:P) - Lowest severity

Attacker needs physical interaction with the device.

Examples:

- Cold boot attacks requiring RAM access

- Hardware implants

- BIOS password bypass

Impact: Requires physical presence

Attack Complexity (AC)

How difficult is it to exploit? Does it require special conditions?

Low (AC:L) - Higher severity

Attacker can exploit reliably without special preparations.

Examples:

- Default credentials that always work

- Buffer overflow with public exploit

- Missing authentication check

Characteristics: Repeatable, doesn't require race conditions or timing

High (AC:H) - Lower severity

Successful exploitation requires special conditions.

Examples:

- Race condition requiring precise timing

- Exploiting specific configurations only

- Requires winning a timing window

- Hash collision requiring specific input

Characteristics: May fail, requires expertise or luck

Privileges Required (PR)

What level of access does the attacker need before exploitation?

None (PR:N) - Highest severity

No authentication required.

Examples:

- Unauthenticated SQL injection

- Public API without authentication

- Anonymous FTP write access

Low (PR:L) - Medium severity

Requires basic user-level credentials.

Examples:

- Authenticated user can escalate to admin

- Standard user can access other users' data

- Registered account can execute code

High (PR:H) - Lowest severity

Requires administrative or significant privileges.

Examples:

- Admin can escape container (still a bug, but requires admin first)

- Flaw that can only be triggered by someone who already holds root or administrator rights

Impact: Limited audience (fewer attackers have admin access)

User Interaction (UI)

Does exploitation require a victim to take action?

None (UI:N) - Higher severity

Exploitation is automatic, no victim action required.

Examples:

- Worm that spreads without clicking

- Unauthenticated exploit against a listening network service

- Server-side vulnerabilities

Characteristics: Fully automated exploitation

Required (UI:R) - Lower severity

Victim must perform an action (click link, open file, etc.).

Examples:

- Phishing link leading to XSS

- Malicious PDF requiring user to open

- Excel macro requiring user to enable

Characteristics: Requires social engineering

Scope (S)

Can the vulnerability affect resources beyond its security scope?

Unchanged (S:U)

Impacts are confined to the vulnerable component.

Example: Heartbleed, which reads memory only from the vulnerable OpenSSL process

Changed (S:C)

Impacts extend beyond the vulnerable component.

Example: Container escape affecting host OS

Example: SSRF accessing internal network from web app

Characteristics: Crosses security boundaries (trust zones, privilege levels)

Impact Metrics (Confidentiality, Integrity, Availability)

High Impact (H)

- Confidentiality: All information disclosed

- Integrity: Complete system compromise

- Availability: Total shutdown

Low Impact (L)

- Confidentiality: Some information disclosed

- Integrity: Limited modification capability

- Availability: Performance degradation

No Impact (N)

- No impact to that specific security property

Lesson 11.3 · Calculating CVSS Scores (Real Examples)

Let's calculate CVSS scores for real vulnerabilities to see how it works.

Example 1: Heartbleed (CVE-2014-0160)

Vulnerability: OpenSSL buffer over-read allowing remote memory disclosure.

Base Metrics Analysis:

- AV:N (Network - exploitable over HTTPS)

- AC:L (Low - reliable exploitation)

- PR:N (None - no authentication required)

- UI:N (None - fully automated)

- S:U (Unchanged - stays within OpenSSL scope)

- C:H (High - can steal private keys, passwords)

- I:N (None - doesn't modify data)

- A:N (None - doesn't crash service)

CVSS Vector String: CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N

Base Score: 7.5 (HIGH)
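The arithmetic behind that 7.5 can be traced by hand. The worked equations below assume the numeric weights published in the CVSS v3.1 specification (AV:N = 0.85, AC:L = 0.77, PR:N = 0.85, UI:N = 0.85, High impact = 0.56, None = 0) and its formulas for an unchanged Scope, where ISS is the Impact Sub-Score and Roundup rounds up to one decimal place.

```latex
\begin{align*}
\text{ISS} &= 1 - (1 - 0.56)(1 - 0)(1 - 0) = 0.56 \\
\text{Impact} &= 6.42 \times \text{ISS} = 6.42 \times 0.56 = 3.5952 \\
\text{Exploitability} &= 8.22 \times 0.85 \times 0.77 \times 0.85 \times 0.85 \approx 3.8870 \\
\text{Base Score} &= \text{Roundup}\bigl(\min(3.5952 + 3.8870,\ 10)\bigr)
                   = \text{Roundup}(7.4822) = 7.5
\end{align*}
```

Exploitability is at its maximum here; the score is held down only because two of the three impact terms are zero.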

Why only 7.5 despite severity?

- No integrity impact (read-only)

- No availability impact (doesn't crash)

- Scope unchanged

But Heartbleed's REAL impact:

- Could steal TLS private keys

- Required mass certificate revocation

- Environmental score would be 9.0+ for critical systems

Example 2: Eternal Blue / WannaCry (CVE-2017-0144)

Vulnerability: Windows SMB remote code execution (RCE).

Base Metrics Analysis:

- AV:N (Network - exploitable over SMB port 445)

- AC:L (Low - reliable exploit, no race conditions)

- PR:N (None - unauthenticated RCE)

- UI:N (None - wormable)

- S:U (Unchanged - compromises the target system)

- C:H (High - full file system access)

- I:H (High - can modify/delete files)

- A:H (High - can shut down system)

CVSS Vector String: CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H

Base Score: 9.8 (CRITICAL)
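If you want to check a vector without opening a calculator, the Base Score equations are short enough to script. The following is a minimal sketch, assuming the metric weights and the Roundup definition from the CVSS v3.1 specification; the function names `roundup` and `base_score` are illustrative, and the code does no input validation.

```python
import math

# Numeric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.20}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
# Privileges Required is weighted differently when Scope is Changed.
PR = {"U": {"N": 0.85, "L": 0.62, "H": 0.27},
      "C": {"N": 0.85, "L": 0.68, "H": 0.50}}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the Base Score for a 'CVSS:3.1/AV:N/...' vector string."""
    m = dict(part.split(":") for part in vector.split("/")[1:])
    scope = m["S"]
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if scope == "U":
        impact = 6.42 * iss
    else:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    exploitability = (8.22 * AV[m["AV"]] * AC[m["AC"]]
                      * PR[scope][m["PR"]] * UI[m["UI"]])
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if scope == "C":
        total *= 1.08
    return roundup(min(total, 10))


print(base_score("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
print(base_score("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N"))  # 7.5
```

The two calls reproduce the scores for Example 2 and Example 1. Treat the script as a learning aid and use the NVD or FIRST calculators for anything you publish.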

Why 9.8?

- Maximum exploitability (AV:N, AC:L, PR:N, UI:N)

- Maximum impact (C:H, I:H, A:H)

- Wormable (led to WannaCry ransomware outbreak)

Example 3: Privilege Escalation (Example)

Vulnerability: Linux sudo privilege escalation requiring local user.

Base Metrics Analysis:

- AV:L (Local - requires shell access)

- AC:L (Low - works reliably)

- PR:L (Low - requires standard user account)

- UI:N (None - automated)

- S:U (Unchanged - escalates within same system)

- C:H (High - root access = full file access)

- I:H (High - can modify system)

- A:H (High - can crash system)

CVSS Vector String: CVSS:3.1/AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H

Base Score: 7.8 (HIGH)
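Vector strings like the one above are compact but easy to mistype. Here is a minimal sketch of a parser that expands a Base vector into readable metric values and rejects malformed input. The name `parse_vector` and the `BASE_METRICS` table are illustrative, and the sketch deliberately handles only the eight Base metrics, not Temporal or Environmental ones.

```python
# Allowed values for each Base metric, keyed by the abbreviations used in vectors.
BASE_METRICS = {
    "AV": {"N": "Network", "A": "Adjacent", "L": "Local", "P": "Physical"},
    "AC": {"L": "Low", "H": "High"},
    "PR": {"N": "None", "L": "Low", "H": "High"},
    "UI": {"N": "None", "R": "Required"},
    "S": {"U": "Unchanged", "C": "Changed"},
    "C": {"H": "High", "L": "Low", "N": "None"},
    "I": {"H": "High", "L": "Low", "N": "None"},
    "A": {"H": "High", "L": "Low", "N": "None"},
}


def parse_vector(vector: str) -> dict:
    """Split a CVSS v3.x Base vector into {metric: readable value}."""
    prefix, *parts = vector.split("/")
    if prefix not in ("CVSS:3.0", "CVSS:3.1"):
        raise ValueError(f"unsupported prefix: {prefix!r}")
    parsed = {}
    for part in parts:
        name, _, value = part.partition(":")
        if name not in BASE_METRICS:
            raise ValueError(f"unknown base metric: {name!r}")
        if value not in BASE_METRICS[name]:
            raise ValueError(f"invalid value {value!r} for {name}")
        parsed[name] = BASE_METRICS[name][value]
    missing = set(BASE_METRICS) - set(parsed)
    if missing:
        raise ValueError(f"missing base metrics: {sorted(missing)}")
    return parsed


print(parse_vector("CVSS:3.1/AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H"))
```

Validating before scoring catches the common mistakes (a swapped letter, a dropped metric) that would otherwise produce a plausible but wrong number.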

Why not Critical?

- Requires local access (AV:L)

- Requires existing user account (PR:L)

- Still HIGH because successful exploitation gives full compromise of the system

Example 4: Reflected XSS (Typical Web Vuln)

Vulnerability: Reflected XSS in web application.

Base Metrics Analysis:

- AV:N (Network - web-based)

- AC:L (Low - works reliably)

- PR:N (None - unauthenticated)

- UI:R (Required - victim must click malicious link)

- S:C (Changed - the vulnerable component is the web application, but the impact lands in the victim's browser)

- C:L (Low - can steal session cookies, not full database)

- I:L (Low - can modify page content, not backend)

- A:N (None - doesn't affect availability)

CVSS Vector String: CVSS:3.1/AV:N/AC:L/PR:N/UI:R/S:C/C:L/I:L/A:N

Base Score: 6.1 (MEDIUM)
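A changed Scope uses a different impact formula and a 1.08 multiplier, so it is worth tracing once by hand. The worked equations below assume the CVSS v3.1 weights (AV:N = 0.85, AC:L = 0.77, PR:N = 0.85, UI:R = 0.62, Low impact = 0.22, None = 0) and the specification's Scope Changed formulas.

```latex
\begin{align*}
\text{ISS} &= 1 - (1 - 0.22)(1 - 0.22)(1 - 0) = 0.3916 \\
\text{Impact} &= 7.52\,(\text{ISS} - 0.029) - 3.25\,(\text{ISS} - 0.02)^{15} \\
              &= 7.52 \times 0.3626 - 3.25 \times 0.3716^{15} \approx 2.7268 \\
\text{Exploitability} &= 8.22 \times 0.85 \times 0.77 \times 0.85 \times 0.62 \approx 2.8353 \\
\text{Base Score} &= \text{Roundup}\bigl(\min(1.08 \times (2.7268 + 2.8353),\ 10)\bigr)
                   = \text{Roundup}(6.007) = 6.1
\end{align*}
```

Note that Roundup always rounds up to the next tenth, which is why 6.007 becomes 6.1 rather than 6.0.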

Why only Medium?

- Requires user interaction (UI:R)

- Limited impact (L/L/N)

However, context matters:

- XSS on banking site = Environmental score rises to about 7.4 (High) with CR:H and IR:H

- XSS on personal blog = Maybe stay at 6.1

Lesson 11.4 · Temporal & Environmental Scoring

Base scores tell part of the story. Temporal and Environmental metrics complete it.

Temporal Metrics: Threat Maturity

Temporal metrics adjust the score based on the current state of exploits and remediation.

Exploit Code Maturity (E)

Unproven (E:U): Theoretical exploit, no working code

Proof-of-Concept (E:P): PoC exploit exists but unreliable

Functional (E:F): Reliable exploit code available

High (E:H): Automated exploit tools (Metasploit module)

Example:

CVE published with CVSS 9.0, but no exploit code → E:U

Temporal Score: 8.2 (9.0 × 0.91, rounded up; lower urgency)

1 week later: Metasploit module released → E:H

Temporal Score: 9.0 (maximum urgency!)

Remediation Level (RL)

Official Fix (RL:O): Vendor released patch

Temporary Fix (RL:T): Official but temporary fix (vendor hotfix or interim workaround)

Workaround (RL:W): Unofficial, non-vendor mitigation (e.g., a config change)

Unavailable (RL:U): No fix available (zero-day)
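With the Exploit Code Maturity and Remediation Level values defined, the Temporal Score is just the Base Score multiplied by three weights and rounded up. Here is a minimal sketch, assuming the weights and Roundup definition from the CVSS v3.1 specification; the function names are illustrative, and "X" stands for Not Defined.

```python
import math

# Temporal metric weights from the CVSS v3.1 specification ("X" = Not Defined).
E = {"X": 1.0, "H": 1.0, "F": 0.97, "P": 0.94, "U": 0.91}
RL = {"X": 1.0, "U": 1.0, "W": 0.97, "T": 0.96, "O": 0.95}
RC = {"X": 1.0, "C": 1.0, "R": 0.96, "U": 0.92}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def temporal_score(base: float, e: str = "X", rl: str = "X", rc: str = "X") -> float:
    """Temporal Score = Roundup(Base x E x RL x RC)."""
    return roundup(base * E[e] * RL[rl] * RC[rc])


print(temporal_score(9.0, e="U"))           # no exploit code yet: 8.2
print(temporal_score(9.0, e="H"))           # exploit widely available: 9.0
print(temporal_score(9.0, e="H", rl="O"))   # same, but official patch out: 8.6
```

Because every weight is at most 1.0, Temporal metrics can only hold a score steady or lower it; they never push it above the Base Score.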

Example:

Zero-day with no patch → RL:U

Temporal Score: Maximum (no defense available)

After vendor patch release → RL:O

Temporal Score: Reduced (patch is now available)

Environmental Metrics: Your Organization's Context

This is where CVSS becomes truly powerful. Customize scores for YOUR environment.

Security Requirements (CR/IR/AR)

For Confidentiality/Integrity/Availability:

- Low (X:L): Not critical to business

- Medium (X:M): Moderately important

- High (X:H): Mission-critical
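To see how much the Security Requirements can move a score, here is a minimal sketch of the Environmental calculation, assuming the CVSS v3.1 weights (requirement Low = 0.5, Medium = 1.0, High = 1.5) and equations. For brevity it leaves every Modified Base metric and every Temporal metric as Not Defined, so only CR, IR and AR change the result. The function name `environmental_score` is illustrative, and the vector is the Heartbleed vector from Lesson 11.3.

```python
import math

AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.20}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR = {"U": {"N": 0.85, "L": 0.62, "H": 0.27},
      "C": {"N": 0.85, "L": 0.68, "H": 0.50}}
# Security Requirement weights ("X" = Not Defined, treated like Medium).
REQ = {"X": 1.0, "L": 0.5, "M": 1.0, "H": 1.5}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def environmental_score(vector: str, cr="X", ir="X", ar="X") -> float:
    """Environmental Score with Modified Base and Temporal metrics Not Defined."""
    m = dict(part.split(":") for part in vector.split("/")[1:])
    scope = m["S"]
    # Modified Impact Sub-Score, capped at 0.915 as the specification requires.
    miss = min(1 - (1 - REQ[cr] * CIA[m["C"]])
                 * (1 - REQ[ir] * CIA[m["I"]])
                 * (1 - REQ[ar] * CIA[m["A"]]), 0.915)
    if scope == "U":
        impact = 6.42 * miss
    else:
        impact = 7.52 * (miss - 0.029) - 3.25 * (miss * 0.9731 - 0.02) ** 13
    exploitability = (8.22 * AV[m["AV"]] * AC[m["AC"]]
                      * PR[scope][m["PR"]] * UI[m["UI"]])
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if scope == "C":
        total *= 1.08
    return roundup(min(total, 10))


HEARTBLEED = "CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N"
for cr in ("L", "M", "H"):
    print(f"CR:{cr} ->", environmental_score(HEARTBLEED, cr=cr))
```

For this vector the output moves from 5.7 (CR:L) through 7.5 (CR:M, identical to the Base Score) to 9.3 (CR:H): the same flaw spans Medium to Critical depending on how much the leaked data matters to you.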

Example: SQL Injection (Base 9.0)

Scenario A: Test Database

- CR:L (test data, not confidential)

- IR:L (test data, integrity doesn't matter)

- AR:L (downtime acceptable)

Environmental Score: ~6.5

Scenario B: Production Payment Database

- CR:H (credit cards = massive breach cost)

- IR:H (transaction integrity critical)

- AR:H (downtime = lost revenue)

Environmental Score: 10.0

SAME VULNERABILITY, DIFFERENT RISK!

Real-World Decision Making

How security teams actually use CVSS:

Step 1: Scanner reports 500 vulnerabilities

Step 2: Filter by Base Score ≥ 7.0 (Critical/High only)

→ Reduces to 150 vulnerabilities

Step 3: Apply Temporal metrics

- Has exploit code? (E:F or E:H) → Priority 1

- Patch available? (RL:O) → Can fix now

→ Reduces to 80 urgent vulnerabilities

Step 4: Apply Environmental metrics

- Internet-facing system? → CR/IR/AR = High

- Internal dev system? → CR/IR/AR = Low

→ Identifies 25 critical fixes for THIS WEEK

Step 5: Patch in priority order:

1. Internet-facing, CVSS 9.0+, active exploits

2. Internet-facing, CVSS 7.0-8.9

3. Internal critical systems, CVSS 9.0+

4. Everything else in next maintenance window

Lesson 11.5 · CVSS Limitations & Real-World Usage

CVSS is powerful but not perfect. Understand its limitations to use it effectively.

Known Limitations of CVSS

1. CVSS Doesn't Measure Risk (Only Severity)

CVSS measures: How bad is the vulnerability technically?

CVSS does NOT measure:

- Likelihood of exploitation

- Business impact

- Asset criticality

- Threat actor motivation

- Compensating controls in place

Example Problem:

CVSS 9.8 RCE in legacy system that's air-gapped

→ CVSS says "Critical"

→ Risk says "Low" (no network access)

Lesson: CVSS + Exposure + Business Impact = Risk

2. Base Scores Often Ignore Context

NVD publishes Base scores only

→ Everyone sees "9.8 Critical"

→ But your firewall might block the attack vector

→ Or the vulnerable feature might be disabled

Example:

CVE-2023-XXXX: PostgreSQL RCE (CVSS 9.8)

- Your environment: PostgreSQL not installed

- Your score: 0.0 (not applicable)
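The "not installed, not applicable" check is easy to automate before any scoring happens. Here is a minimal sketch, assuming you can export a list of installed products from an asset inventory. The `Finding` class, the `applicable` function, the placeholder CVE IDs and the inventory contents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve_id: str        # placeholder IDs below, not real CVEs
    product: str
    base_score: float


def applicable(findings, installed_products):
    """Keep only findings whose product actually exists in this environment."""
    installed = {name.lower() for name in installed_products}
    return [f for f in findings if f.product.lower() in installed]


findings = [
    Finding("CVE-EXAMPLE-0001", "PostgreSQL", 9.8),
    Finding("CVE-EXAMPLE-0002", "nginx", 7.5),
]
inventory = {"nginx", "openssl"}  # hypothetical inventory export

for finding in applicable(findings, inventory):
    print(finding.cve_id, finding.product, finding.base_score)
```

Only the nginx finding survives the filter; the 9.8 drops out because the product is absent, which is exactly the context a published Base Score cannot capture.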

NVD can't know YOUR environment → You must calculate Environmental score

3. Scope Changes Cause Confusion

S:C (Scope Changed) adds significant points

But what counts as "scope change" is debatable

Container escape: Obviously S:C

XSS affecting different users: S:C or S:U?

VM escape: Obviously S:C

Vendors sometimes disagree on Scope → Different scores for same vuln

4. Doesn't Account for Compensating Controls

WAF blocking exploit → Still CVSS 9.0

MFA preventing credential theft → Still CVSS 7.5

Network segmentation blocking lateral movement → Still CVSS 8.0

CVSS assumes no defenses in place

Real risk is lower if you have defense-in-depth

Alternatives and Complements to CVSS

EPSS (Exploit Prediction Scoring System)

EPSS predicts: "What's the probability this CVE will be exploited in the next 30 days?"

Uses machine learning on:

- Exploit availability

- Dark web chatter

- Exploitation trends

- Vulnerability characteristics

Example:

CVE-A: CVSS 9.0, EPSS 0.02 (2% exploitation probability)

CVE-B: CVSS 6.5, EPSS 0.85 (85% exploitation probability)

Which to patch first? CVE-B! (Higher exploitation likelihood)
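One simple way to combine the two numbers is to weight severity by exploitation probability and sort on the result. The sketch below is only one possible heuristic, not part of either CVSS or EPSS: the `expected_severity` function is an assumption made for illustration, and the data is the CVE-A / CVE-B example above.

```python
vulns = [
    {"id": "CVE-A", "cvss": 9.0, "epss": 0.02},
    {"id": "CVE-B", "cvss": 6.5, "epss": 0.85},
]


def expected_severity(vuln: dict) -> float:
    """Illustrative heuristic: severity weighted by exploitation probability."""
    return vuln["cvss"] * vuln["epss"]


# Highest weighted score first.
ranked = sorted(vulns, key=expected_severity, reverse=True)

for vuln in ranked:
    print(vuln["id"], round(expected_severity(vuln), 2))
```

CVE-B ranks first (about 5.5 versus 0.18 for CVE-A), matching the reasoning above. In practice you would still layer asset criticality on top rather than trust a single product of two numbers.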

Use together: CVSS (severity) + EPSS (likelihood) = Better prioritization

SSVC (Stakeholder-Specific Vulnerability Categorization)

Decision-tree approach asking:

1. Is it being exploited? (Active, PoC, None)

2. What's the exposure? (Internet, Controlled, Small)

3. What's the impact? (Total, Partial, Minimal)

4. What's the mission impact? (Mission Failure, Degraded, None)

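Output: a prioritization decision such as Defer, Scheduled, Out-of-Cycle, or Immediate (CISA's version uses Track, Track*, Attend, Act)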

More context-aware than CVSS

Used by CISA for federal agencies

Best Practices for Using CVSS

1. Don't rely on Base Score alone

Calculate Environmental scores for critical assets

2. Combine with threat intelligence

CVSS 7.0 + active exploitation = Emergency

CVSS 9.0 + no exploit code = High priority (not emergency)

3. Use CVSS as ONE input to prioritization

CVSS + EPSS + Asset Criticality + Compensating Controls = Risk Score

4. Automate scoring where possible

Vulnerability scanners auto-calculate CVSS

CMDB can provide asset criticality

SIEM can detect active exploitation

5. Publish your organization's SLA

Critical (9.0-10.0): Patch within 7 days

High (7.0-8.9): Patch within 30 days

Medium (4.0-6.9): Patch within 90 days

Low (0.1-3.9): Patch when convenient

6. Recognize exceptions

CVSS 5.0 in payment system > CVSS 8.0 in dev lab

Business context overrides CVSS

Key Takeaway: CVSS is a common language for severity, not a complete risk assessment. Use it as a starting point, then add organizational context, threat intelligence, and business impact to make informed decisions. No single metric can replace human judgment informed by context.

📚 Building on Prior Knowledge

This week connects to concepts you've already learned:

- CSY101 Week 1 (Risk Formula): Risk = Threat × Vulnerability × Impact. CVSS quantifies Vulnerability severity, but you must add Threat likelihood and Impact to your organization to get true Risk.

- CSY101 Week 1 (CIA Triad): CVSS Impact metrics directly map to CIA: Confidentiality Impact (C), Integrity Impact (I), Availability Impact (A).

- CSY104 Week 1-10 (Network Protocols): Attack Vector (AV) makes sense because you understand Network vs Adjacent vs Local access from your networking knowledge.

- Looking ahead: You'll use CVSS throughout your career:

- CSY201 (SOC Operations): Prioritize vulnerability remediation

- CSY202 (Ethical Hacking): Assess severity of findings

- CSY203 (Web Security): Score web application vulnerabilities

- CSY303 (GRC): Report vulnerability metrics to leadership

- CSY399 (Capstone): Include CVSS scores in assessment reports

🎯 Hands-On Labs (Free & Essential)

Practice CVSS scoring with real CVEs and vulnerability assessment workflows.

🔬 Lab 1: NIST NVD CVSS Calculator

Task: Use NIST's official CVSS v3.1 calculator to score real vulnerabilities. Calculate Base, Temporal, and Environmental scores for 3 different CVEs.

Specific Requirements:

1. Go to NIST CVSS Calculator

2. Choose 3 CVEs from recent NIST NVD database (search at nvd.nist.gov)

3. For each CVE:

• Read the vulnerability description

• Calculate Base Score (select all metrics)

• Justify each metric choice (why AV:N? why C:H?)

• Compare your score to NVD's published score

• Calculate Environmental score assuming: Critical production system, internet-facing, high CIA requirements

4. Write 1-2 paragraphs per CVE explaining the scoring rationale

Deliverable: Document with 3 CVE analyses including:

• CVE ID and description

• Your calculated Base Score + vector string

• NVD's published score (compare)

• Environmental score for critical system

• Justification for each metric

Why it matters: Vulnerability assessment reports require CVSS scores. You must be able to calculate and defend your scoring decisions.

Time estimate: 60-90 minutes

🔬 Lab 2: Vendor Score Comparison

Task: Compare CVSS scores from different sources for the same vulnerability to understand scoring variation.

Specific Requirements:

1. Choose 2 high-profile CVEs from the past year

2. Find CVSS scores from multiple sources:

• NIST NVD

• Vendor security advisory (Red Hat, Microsoft, etc.)

• CVE Details (cvedetails.com)

• Vulnerability scanner (if accessible - Nessus, Qualys)

3. For each CVE:

• Document all scores found

• Identify differences (do they agree on severity rating?)

• Analyze the vector strings - which metrics differ?

• Explain WHY scores might differ (different interpretations of Scope, Impact, etc.)

4. Determine which score you trust most and why

Example CVEs to consider:

• Log4Shell (CVE-2021-44228)

• Spring4Shell (CVE-2022-22965)

• Recent Microsoft Exchange vulnerabilities

Deliverable: Comparison table + analysis explaining score variations

Why it matters: Different sources calculate CVSS differently. You must understand the rationale to make informed decisions.

Time estimate: 45-60 minutes

🔬 Lab 3: Vulnerability Prioritization Exercise

Task: You're a security analyst with 10 vulnerabilities to remediate. Prioritize them using CVSS + business context.

Scenario: Your vulnerability scan identified these issues:

Vulnerability List:

1. SQL Injection in public web app (Base: 9.8, AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H)

• Exploits available (E:F), Patch available (RL:O)

• Asset: Public e-commerce site (CR:H, IR:H, AR:H)

2. RCE in internal file server (Base: 8.0, AV:A/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H)

• No known exploits (E:U), Patch available (RL:O)

• Asset: Internal document storage (CR:M, IR:L, AR:M)

3. XSS in admin panel (Base: 6.1, AV:N/AC:L/PR:N/UI:R/S:C/C:L/I:L/A:N)

• PoC available (E:P), No official patch but a workaround exists (RL:W)

• Asset: Admin interface (only 5 admins access) (CR:M, IR:M, AR:L)

4. Privilege escalation on dev server (Base: 7.8, AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H)

• Metasploit module exists (E:H), Patch available (RL:O)

• Asset: Development/test environment (CR:L, IR:L, AR:L)

5. Outdated OpenSSL (no known vulns) (Base: 5.3, Info disclosure)

• No exploits (E:U), Update available (RL:O)

• Asset: Internal API gateway (CR:H, IR:H, AR:H)

6. Unpatched WordPress plugin (Base: 10.0, RCE, AV:N/AC:L/PR:N/UI:N/S:C/C:H/I:H/A:L)

• Active exploitation in wild (E:H), Patch available (RL:O)

• Asset: Marketing blog (CR:L, IR:L, AR:M)

7-10. [Create 4 more realistic vulnerabilities yourself]

Your Task:

1. Calculate Temporal Score for each (Base × Temporal modifiers)

2. Calculate Environmental Score (account for CR/IR/AR)

3. Consider business context:

• Customer data exposure = regulatory fines

• E-commerce downtime = $50k/hour revenue loss

• Internal systems don't face internet

4. Create a prioritized remediation plan (1-10 ranking)

5. Justify your ranking (why is #1 more urgent than #2?)

6. Define SLAs: Which need fixing THIS WEEK? Which can wait?

Deliverable: Prioritization matrix with:

• Base, Temporal, Environmental scores

• Priority ranking (1-10)

• Remediation timeline

• Justification for each decision

Why it matters: Real security work = prioritizing limited resources. CVSS + context = effective risk management.

Time estimate: 60-90 minutes

💡 Lab Tip: When prioritizing vulnerabilities, ask: "If I can only patch ONE thing this week, which reduces risk the most?" CVSS guides you, but business context makes the final call.

Resources

Official CVSS resources and vulnerability databases.

- CVSS v3.1 Specification (FIRST.org) · Official Standard (Required)

- NIST NVD - CVSS Resources & Calculator · Official Tool (Required)

- FIRST CVSS v3.1 Calculator · Interactive Tool (Optional)

- EPSS - Exploit Prediction Scoring System · Complementary System (Optional)

- CISA SSVC - Stakeholder-Specific Vulnerability Categorization · Alternative Framework (Optional)

Self-Check Questions

Test your understanding of CVSS scoring and vulnerability assessment.

- What are the three CVSS metric groups? Which is required?

- A vulnerability requires a victim to click a phishing link. What is the User Interaction (UI) metric? How does this affect severity?

- Explain the difference between Attack Vector: Network (AV:N) and Attack Vector: Adjacent (AV:A). Give an example of each.

- What's the difference between CVSS severity and risk? Why might a CVSS 9.8 vulnerability have lower risk than a CVSS 6.0?

- You have 100 vulnerabilities to patch. How would you prioritize using CVSS + business context?

- What is Scope (S) in CVSS? What's an example of S:C (Scope Changed)?

- A vulnerability has Base Score 8.0, but a Metasploit module was released. How does this affect the Temporal Score?

- What is EPSS and how does it complement CVSS?

- Your organization has a CVSS 7.5 vulnerability on an internet-facing payment system. Calculate the Environmental Score assuming CR:H, IR:H, AR:H. What's your patching timeline?

- What are two major limitations of CVSS? How do security teams address them?

Reflection Prompt

Reflection Prompt (300-400 words):

Before this week, you might have seen "CVSS 9.8" in vulnerability reports without understanding how that number was calculated or what it truly means.

Reflect on these questions:

- How has your understanding of vulnerability severity changed? Do you now see CVSS as more nuanced than just "high = urgent"?

- When calculating CVSS scores in the labs, which metrics were hardest to assess? Attack Complexity? Scope? Why?

- How will you use CVSS in your future security work? Will you calculate Environmental scores, or rely on Base scores?

- What surprised you about CVSS limitations? How might you complement CVSS with other prioritization methods (threat intelligence, EPSS, business impact)?

- If you were explaining CVSS to a non-technical manager, how would you describe why a "Critical" vulnerability might not need immediate patching?

A strong reflection will demonstrate understanding of CVSS as a tool (not a mandate), acknowledge its limitations, and articulate how you'll combine quantitative scoring with qualitative judgment to prioritize security work.